The launch of the artificial intelligence (AI) large language model ChatGPT was met with both enthusiasm (“Wow! This tool can write as well as humans”) and fear (“Wow…this tool can write as well as humans”).

ChatGPT was just the first in a wave of new AI tools designed to mimic human communication via text. Since the launch of ChatGPT in November 2022, new AI chatbots have made their debut, including Google’s Bard and ChatGPT for Microsoft Bing, and new generative AI tools that use GPT technology have emerged, such as Chat with any PDF. Additionally, ChatGPT became more advanced – shifting from using GPT-3 to GPT-3.5 for the free version and GPT-4 for premium users.

With increasing access to different types of AI chatbots, and increasing advances in AI technology, “preventing student cheating via AI” has risen to the top of the list of faculty concerns for 2023 (Lucariello, 2023). Should ChatGPT be banned in class or should you encourage the use of it? Should you redesign your academic integrity syllabus statement or does your current one suffice? Should you change the way you give exams and design assignments?

As you grapple with the role AI plays in aiding student cheating, here are six key points to keep in mind:

- Banning AI chatbots can exacerbate the digital divide.

- Banning the use of technology for exams can create an inaccessible, discriminatory learning experience.

- AI text detectors are not meant to be used to catch students cheating.

- Redesigning academic integrity statements is essential.

- Students need opportunities to learn with and about AI.

- Redesigning assignments can reduce the potential for cheating with AI.

In the following section, I will discuss each of these points.

1. Banning AI chatbots can exacerbate the digital divide.

Sometimes when a new technology comes out that threatens to disrupt the norm, there is a knee-jerk reaction that leads to an outright ban on the technology. Just take a look at the article, “Here are the schools and colleges that have banned the use of ChatGPT over plagiarism and misinformation fears” (Nolan, 2023), and you will find several U.S. K-12 school districts, international universities, and even entire jurisdictions in Australia that quickly banned the use of ChatGPT after its debut.

But banning AI chatbots “risks widening the gap between those who can harness the power of this technology and those who cannot, ultimately harming students’ education and career prospects” (Canales, 2023, para. 1). ChatGPT, GPT-3, and GPT-4 technology are already being embedded into several careers, from law (e.g., “OpenAI-backed startup brings chatbot technology to first major law firm”) to real estate (e.g., “Real estate agents say they can’t imagine working without ChatGPT now”). Politicians are using ChatGPT to write bills (e.g., “AI wrote a bill to regulate AI. Now Rep. Ted Lieu wants Congress to pass it”). The Democratic National Committee found that AI-generated content performed just as well as, and sometimes better than, human-generated content for fundraising (“A Campaign Aide Didn’t Write That Email. A.I. Did”).

Ultimately, the “effective use of ChatGPT is becoming a highly valued skill, impacting workforce demands” (Canales, 2023, para. 3). College students who do not have the opportunity to learn when and how to use AI chatbots in their field of study will be at a disadvantage in the workforce compared to those who do – thus expanding the digital divide.

2. Banning the use of technology for exams can create an inaccessible, discriminatory learning experience.

It might be tempting to turn to low-tech options for assessments, such as oral exams and handwritten essays, as a way to prevent cheating with AI. However, these old-fashioned assessment techniques often create new barriers to learning, especially for disabled students, English language learners, neurodiverse students, and any other students who rely on technology to aid their thinking, communication, and learning.

Take, for example, a student with limited manual dexterity who relies on speech-to-text tools for writing, but instead is asked to handwrite exam responses in a blue book. Or, an English language learner who relies on an app to translate words as they write essays. Or, a neurodiverse student who struggles with verbal communication and is not able to show their true understanding of the course content when the instructor cold calls them as a form of assessment.

Banning technology use and resorting to low-tech options for exams would put these students, and others who rely on technology as an aid, at a disadvantage and negatively impact their learning experience and academic success. Keep in mind that while some of the students in these examples might have a documented disability accommodation that requires an alternative form of assessment, not all students who rely on technology as an aid for their thinking, communication, or learning have a documented disability that would entitle them to the same accommodations. Additionally, exams that require students to demonstrate their knowledge right on the spot, like oral exams, may contribute to or intensify feelings of stress and anxiety and, thus, hinder the learning process for many, if not all, students (see “Why Your Brain on Stress Fails to Learn Properly”).

3. AI text detectors are not meant to be used to catch students cheating.

AI text detectors do not work in the same way that plagiarism checkers do. Plagiarism checkers compare human-written text with other human-written text. AI text detectors guess the probability that a text is written by humans or AI. For example, the Sapling AI Content Detector “uses a machine learning system (a Transformer) similar to that used to generate AI content. Instead of generating words, the AI detector instead generates the probability it thinks [emphasis added] each word or token in the input text is AI-generated or not” (2023, para. 7).
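To make the idea of "guessing" concrete, here is a minimal Python sketch. It is not how Sapling or any other detector is actually implemented: the per-token probabilities, the averaging step, and the cutoff values are all hypothetical, chosen only to show that the final verdict rests on estimated probabilities and an arbitrary threshold.

```python
from statistics import mean

# Hypothetical per-token probabilities that each token is AI-generated.
# These numbers are invented for illustration; a real detector would
# estimate them with a trained model.
token_probs = [
    ("Students", 0.62),
    ("need", 0.48),
    ("opportunities", 0.71),
    ("to", 0.55),
    ("learn", 0.66),
]


def document_score(probs):
    """Average the per-token guesses into one document-level guess (an assumed aggregation)."""
    return mean([p for _, p in probs])


def label(score, threshold):
    """Turn a probability into a verdict using an assumed cutoff."""
    return "flagged as AI-generated" if score >= threshold else "not flagged"


score = document_score(token_probs)
for threshold in (0.5, 0.7):
    print(f"score={score:.2f} threshold={threshold} -> {label(score, threshold)}")
```

With these made-up numbers, the same sentence is flagged at a cutoff of 0.5 and not flagged at a cutoff of 0.7. The verdict is a judgment about probabilities, not a match against a known source.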

Let me repeat, AI text detectors are guessing whether a text is written by AI or not.

As such, many of the AI text detector tools specifically state that they should not be used to catch or punish students for cheating:

- “Our classifier has a number of important limitations. It should not be used as a primary decision-making tool, [emphasis added] but instead as a complement to other methods of determining the source of a piece of text” (OpenAI; Kirchner et al., 2023, para. 7).

- “The nature of AI-generated content is changing constantly. As such, these results should not be used to punish students [emphasis added]. While we build more robust models for GPTZero, we recommend that educators take these results as one of many pieces in a holistic assessment of student work” (GPTZero homepage).

- “No current AI content detector (including Sapling’s) should be used as a standalone check to determine whether text is AI-generated or written by a human. False positives and false negatives will regularly occur” (Sapling AI Content Detector homepage).

In an empirical review of AI text generation detectors, Sadasivan and colleagues (2023) found “that several AI-text detectors are not reliable in practical scenarios” (p. 1). Additionally, the use of AI text detectors can be particularly harmful for English language learners, students with communication disabilities, and others who were taught to write in a way that matches AI-generated text or who use AI chatbots to improve the quality and clarity of their writing. Gegg-Harrison (2023) shared this worry:

My biggest concern is that schools will listen to the hype and decide to use automated detectors like GPTZero and put their students through ‘reverse Turing Tests,’ and I know that the students that will be hit hardest are the ones we already police the most: the ones who we think ‘shouldn’t’ be able to produce clear, clean prose of the sort that LLMs generate. The non-native speakers. The speakers of marginalized dialects (para. 7).


Before you consider using an AI text detector to identify potential instances of cheating, take a look at this open access AI Text Detectors slide deck, which was designed to support educators in making an informed decision about the use of these tools in their practice.

4. Redesigning academic integrity statements is essential.

AI chatbots have elevated the importance of academic integrity. While passing AI-generated text off as human-generated seems like a clear violation of academic integrity, what about using AI chatbots to revise text to improve the writing quality and language? Or, what about using AI chatbots to generate reference lists for a paper? Or, how about using an AI chatbot to find errors in code to make it easier to debug?

Students need to have opportunities to discuss what role AI chatbots, and other AI tools, should and should not play in their learning, thinking, and writing. Without these conversations, people and even organizations are left trying to figure this out on their own, often at their own expense or the expense of others. Take, for example, the mental health support company Koko, which decided to run an experiment on users seeking emotional support by augmenting, and in some cases replacing, human-generated responses with GPT-3-generated responses. When users found out that the responses they received were not entirely written by humans, they were shocked and felt deceived (Ingram, 2023). Then, there was the lawyer who used ChatGPT to create a legal brief for the Federal District Court, but was caught doing so because the brief included fake judicial opinions and legal citations (Weiser & Schweber, 2023). It seems like everyone is trying to figure out what role ChatGPT and other AI chatbots might play in generating text or aiding writing.

College courses can be a good place for starting conversations about academic integrity. However, academic integrity is often part of the hidden curriculum – something students are expected to know and understand, but not explicitly discussed in class. For example, faculty are typically required to put boilerplate academic integrity statements in their syllabi. My university requires the following text in every syllabus:

Since the integrity of the academic enterprise of any institution of higher education requires honesty in scholarship and research, academic honesty is required of all students at the University of Massachusetts Amherst. Academic dishonesty is prohibited in all programs of the University. Academic dishonesty includes but is not limited to: cheating, fabrication, plagiarism, and facilitating dishonesty. Appropriate sanctions may be imposed on any student who has committed an act of academic dishonesty. Instructors should take reasonable steps to address academic misconduct. Any person who has reason to believe that a student has committed academic dishonesty should bring such information to the attention of the appropriate course instructor as soon as possible. Instances of academic dishonesty not related to a specific course should be brought to the attention of the appropriate department Head or Chair. Since students are expected to be familiar with this policy and the commonly accepted standards of academic integrity, ignorance of such standards is not normally sufficient evidence of lack of intent. [emphasis added] (University of Massachusetts Amherst, 2023).

While there is a detailed online document describing cheating, fabrication, plagiarism, and facilitating dishonesty, it is unlikely that students have been given the time to explore or discuss that document; and the document has not been updated to include what these behaviors might look like in the era of AI chatbots. Even still, students are expected to demonstrate academic integrity.

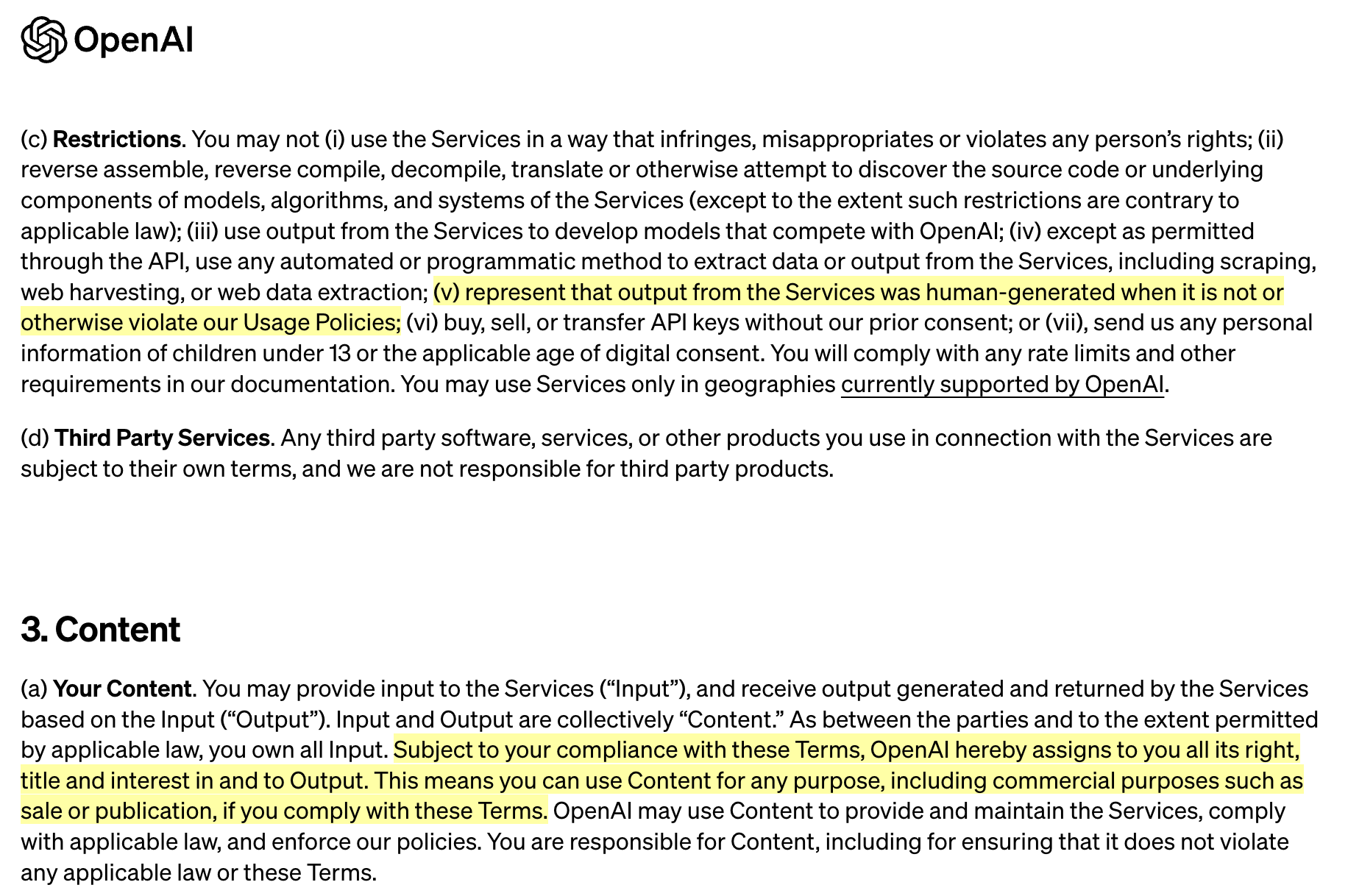

What makes this even more challenging is that OpenAI’s Terms of Use state that users own the output (anything they prompt ChatGPT to generate) and can use the output for any purpose, even commercial purposes, as long as they abide by the Terms. However, the Terms of Use also state that users cannot present ChatGPT-generated text as human-generated. So, turning in a fully ChatGPT-written essay is a clear violation of the Terms of Use (and considered cheating), but what if students only use a few ChatGPT-written sentences in an essay? Or, use ChatGPT to rewrite some of the paragraphs in a paper? Are these examples a violation of the OpenAI Terms of Use or of academic integrity?

Students need opportunities to discuss the ethical issues surrounding the use of AI chatbots. These conversations can, and should, start in formal education settings. Here are some ways you might go about getting these conversations started:

- Update your course academic integrity policy in your syllabus to include what role AI technologies should and should not play and then ask students to collaboratively annotate the policy and offer their suggestions.

- Invite students to co-design the academic integrity policy for your course (maybe they want to use AI chatbots for helping with their writing…Or, maybe they don’t want their peers to use AI chatbots because that provides an advantage to those who use the tools!).

- Provide time in class for students to discuss the academic integrity policy.

If you are in need of example academic integrity statements to use as inspiration, check out the Classroom Policies for AI Generative Tools document curated by Lance Eaton.

5. Students need opportunities to learn with and about AI.

There are currently more than 550 AI startups that have raised a combined $14 billion in funding (Currier, 2022). AI will be a significant part of students’ futures; and as such, students need the opportunity to learn with and about AI.

Learning with AI involves providing students with the opportunity to use AI technologies, including AI chatbots, to aid their thinking and learning. While it might seem like students only use AI chatbots to cheat, in fact, they are more likely using AI chatbots to help with things like brainstorming, improving the quality of their writing, and personalized learning. AI can aid learning in several different ways, including serving as an “AI-tutor, AI-coach, AI-mentor, AI-teammate, AI-tool, AI-simulator, and AI-student” (Mollick & Mollick, 2023, p. 1). AI chatbots can also provide on-demand explanations, personalized learning experiences, critical and creative thinking support, reading and writing support, continuous learning opportunities, and reinforcement of core knowledge (Nguyen et al., 2023; Trust et al., 2023). Tate and colleagues (2023) asserted that the use of AI chatbots could be advantageous for those who struggle to write well, including non-native speakers and individuals with language or learning disabilities.

Learning about AI means providing students with the opportunity to critically interrogate AI technologies. AI chatbots can provide false, misleading, harmful, and biased information. They are often trained on data “scraped” (or what might be considered “stolen”) from the web. The text they are trained on privileges certain ways of thinking and writing. These tools can serve as “misinformation superspreaders” (Brewster et al., 2023). Many of these tools make money off of free labor or cheap foreign labor. Therefore, students need to learn how to critically examine the production, distribution, ownership, design, and use of these tools in order to make an informed decision about if and how to use them in their field of study and future careers. For instance, students in a political science course might examine the ethics of using an AI chatbot to create personalized campaign ads based on demographic information. Or, students in a business course might debate whether companies should require the use of AI chatbots to increase productivity. Or, students in an education course might investigate how AI chatbots make money by using, selling, and sharing user data and reflect upon whether the benefits of using these tools outweigh the risks (e.g., invading student privacy, giving up student data).

Two resources to help you get started with helping students critically evaluate AI tools are the Civics of Technology Curriculum and the Critical Media Literacy Guide for Analyzing AI Writing Tools.

6. Redesigning assignments can reduce the potential for cheating with AI.

Students are more likely to cheat when there is a stronger focus on scores (grades) than learning (Anderman, 2015), there is increased stress, pressure, and anxiety (Piercey, 2020), there is a lack of focus on academic integrity, trust, and relationship building (Lederman, 2020), the material is not perceived to be relevant or valuable to students (Simmons, 2018), and instruction is perceived to be poor (Piercey, 2020).

Torrey Trust, PhD, is an associate professor of learning technology in the Department of Teacher Education and Curriculum Studies in the College of Education at the University of Massachusetts Amherst. Her work centers on the critical examination of the relationship between teaching, learning, and technology; and how technology can enhance teacher and student learning. In 2018, Dr. Trust was selected as one of the recipients for the ISTE Making IT Happen Award, which “honors outstanding educators and leaders who demonstrate extraordinary commitment, leadership, courage and persistence in improving digital learning opportunities for students.”

References

Anderman, E. (2015, May 20). Students cheat for good grades. Why not make the classroom about learning and not testing? The Conversation. https://theconversation.com/students-cheat-for-good-grades-why-not-make-the-classroom-about-learning-and-not-testing-39556

Brewster, J., Arvanitis, L., & Sadeghi, M. (2023, January). The next great misinformation superspreader: How ChatGPT could spread toxic misinformation at unprecedented scale. NewsGuard. https://www.newsguardtech.com/misinformation-monitor/jan-2023/

Canales, A. (2023, April 17). ChatGPT is here to stay. Testing & curriculum must adapt for students to succeed. The 74 Million. https://www.the74million.org/article/chatgpt-is-here-to-stay-testing-curriculum-must-adapt-for-students-to-succeed/

CAST. (2018). Universal Design for Learning Guidelines version 2.2. http://udlguidelines.cast.org

Currier, J. (2022, December). The NFX generative tech market map. NFX. https://www.nfx.com/post/generative-ai-tech-market-map

Gegg-Harrison, W. (2023, February 27). Against the use of GPTZero and other LLM-detection tools on student writing. Medium. https://writerethink.medium.com/against-the-use-of-gptzero-and-other-llm-detection-tools-on-student-writing-b876b9d1b587

GPTZero. (n.d.). https://gptzero.me/

Ingram, D. (2023, January 14). A mental health tech company ran an AI experiment on real users. Nothing’s stopping apps from conducting more. NBC News. https://www.nbcnews.com/tech/internet/chatgpt-ai-experiment-mental-health-tech-app-koko-rcna65110

Kirchner, J. H., Ahmad, L., Aaronson, S., & Leike, J. (2023, January 31). New AI classifier for indicating AI-written text. OpenAI. https://openai.com/blog/new-ai-classifier-for-indicating-ai-written-text

Lederman, D. (2020, July 21). Best way to stop cheating in online courses? Teach better. Inside Higher Ed. https://www.insidehighered.com/digital-learning/article/2020/07/22/technology-best-way-stop-online-cheating-no-experts-say-better

Lucariello, K. (2023, July 12). Time for class 2023 report shows number one faculty concern: Preventing student cheating via AI. Campus Technology. https://campustechnology.com/articles/2023/07/12/time-for-class-2023-report-shows-number-one-faculty-concern-preventing-student-cheating-via-ai.aspx

Mollick, E., & Mollick, L. (2023). Assigning AI: Seven approaches for students, with prompts. ArXiv. https://arxiv.org/abs/2306.10052

Nguyen, T., Cao, L., Nguyen, P., Tran, V., & Nguyen, P. (2023). Capabilities, benefits, and role of ChatGPT in chemistry teaching and learning in Vietnamese high schools. EdArXiv. https://edarxiv.org/4wt6q/

Nolan, B. (2023, January 30). Here are the schools and colleges that have banned the use of ChatGPT over plagiarism and misinformation fears. Business Insider. https://www.businessinsider.com/chatgpt-schools-colleges-ban-plagiarism-misinformation-education-2023-1

Piercey, J. (2020, July 9). Does remote instruction make cheating easier? UC San Diego Today. https://today.ucsd.edu/story/does-remote-instruction-make-cheating-easier

Sapling AI Content Detector. (n.d.). https://sapling.ai/ai-content-detector

Simmons, A. (2018, April 27). Why students cheat – and what to do about it. Edutopia. https://www.edutopia.org/article/why-students-cheat-and-what-do-about-it

Sinha, T., & Kapur, M. (2021). When problem solving followed by instruction works: Evidence for productive failure. Review of Educational Research, 91(5), 761-798.

Tate, T. P., Doroudi, S., Ritchie, D., Xu, Y., & Warschauer, M. (2023, January 10). Educational research and AI-generated writing: Confronting the coming tsunami. EdArXiv. https://doi.org/10.35542/osf.io/4mec3

Trust, T., Whalen, J., & Mouza, C. (2023). ChatGPT: Challenges, opportunities, and implications for teacher education. Contemporary Issues in Technology and Teacher Education, 23(1), 1-23.

University of Massachusetts Amherst. (2023). Required syllabi statements for courses submitted for approval. https://www.umass.edu/senate/content/syllabi-statements

Weiser, B. & Schweber, N. (2023, June 8). The ChatGPT lawyer explains himself. The New York Times. https://www.nytimes.com/2023/06/08/nyregion/lawyer-chatgpt-sanctions.html